There’s going to be an XML Prague in 2012, and I’m going to be there, again. Already looking forward to it. Not enough XML geekery for me lately.

XML Prague 2012

Speaking of XML conferences, XML Prague 2012 has been announced and will take place a month earlier than the last few times, on February 10-12. The venue is also new, a good thing since the last two events were sold out.

Looking forward to this one already.

An Even-Simpler Markup Language?

In his blog, Norman Walsh writes about an even-simpler-than-MicroXML markup language, inspired in part by John Cowan’s XML Prague poster and by James Clark’s MicroXML ideas. His ideas are well worth serious consideration (Norm’s ideas always are), but the purist in me cringes at the idea of allowing more than one root element. I have to say that I find the idea attractive, but I’m not really big on change, so maybe that is why I hesitate.

The pragmatist in me, on the other hand, also cringes at Norm’s not doing away with namespaces when he has the chance. In my experience they always create more problems than they solve, but then again, my experience tends to be with strictly controlled environments, where the issues one usually wishes to solve using namespaces can be dealt with by other means.
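For anyone who hasn’t run into these two rules lately, here is a minimal sketch of what they look like in practice, using Python’s standard xml.etree.ElementTree parser. The element names and the http://example.com/fml namespace URI are made up for illustration; they are not taken from Norm’s proposal or from any real vocabulary.

```python
import xml.etree.ElementTree as ET


def parses_as_xml(text: str) -> bool:
    """Return True if the standard-library XML parser accepts the text."""
    try:
        ET.fromstring(text)
        return True
    except ET.ParseError:
        return False


# One root element: well-formed XML 1.0.
single_root = "<film><title>Metropolis</title></film>"

# Two top-level elements: an XML 1.0 parser rejects this.
two_roots = "<film/><film/>"

# A default namespace (hypothetical URI) changes the name the parser
# reports for every element, so an unqualified lookup finds nothing.
namespaced = ET.fromstring(
    '<film xmlns="http://example.com/fml"><title>Metropolis</title></film>'
)
plain_lookup = namespaced.find("title")
qualified_lookup = namespaced.find("{http://example.com/fml}title")

if __name__ == "__main__":
    print(parses_as_xml(single_root))    # accepted
    print(parses_as_xml(two_roots))      # rejected
    print(plain_lookup is None)          # unqualified name does not match
    print(qualified_lookup is not None)  # qualified name does
```

The second half is the kind of thing I mean by namespaces creating problems: the same document needs different lookup code the moment a namespace declaration is added.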

Until Next Year, XML Prague

This year’s XML Prague is over and I miss it already. For a markup geek, XML Prague is heaven. There is always so much to learn, so many great minds and cool new ideas, not to mention Czech beer and the friendly atmosphere of a smaller conference. This was my third consecutive year attending and I very much look forward to the fourth.

Some notes of interest:

- XML Prague is a great success. The conference sold out before the sessions were announced so next year, it will move to a larger venue.

- HTML5, last year’s hot topic, was pronounced dead more than once.

- Michael Kay announced (and demo’d) Saxon Client Edition, which allows you to run XSLT 2.0 in the browser. Very cool. Saxon CE is in alpha but available for testing at www.saxonica.com.

- JSON seems to be hot this year. I should probably spend some time learning it, especially since I am planning to use it in the CMS we develop at Condesign.

- George Bina from SyncRO Soft Ltd, the company that makes Oxygen, presented some ideas regarding advanced XML development. While Oxygen is at the centre of many of these, his point was that there should be a standardised way to do it all. Dave Pawson suggested, via Twitter, expanding XML catalog files for the job, an idea I find plausible.

- Murata Makoto, a personal hero of mine thanks to his work with Relax NG, presented EPUB3. What those of us who were there will remember, however, is his introduction, expressing his grief over the on-going catastrophe in Japan.

See www.xmlprague.cz for more.

XML Prague 2011, Part Two

This year, my paper wasn’t accepted for XML Prague. I guess I’ll just have to go there anyway.

XML Prague 2011

XML Prague 2011 will take place on March 26th & 27th. I’m so going to be there.

Back from XML Prague

I’m back home from XML Prague. It’s been a fabulous weekend with many interesting talks and lots of good ideas, and I’m still trying to sort my impressions. So many things I want to try, so many technologies I want to learn. The feedback from my talk on Film Markup Language alone is enough to keep me busy for a few weeks.

More later, but for now, suffice it to say that I’m already thinking of a subject for a presentation next year.

It’s Quite Possible to Lose Your Way in Prague

I drove to Prague for XML Prague, yesterday. I left Göteborg on Wednesday evening, taking the ferry to Kiel, and then spent most of Thursday on the Autobahn. It all went without a hitch; not that I’m that good but my GPS is. I would probably have ended up in Poland without it because I often miss the road signs when on my own. Some of my business trips before the GPS era were truly memorable.

So today I took a walk around central Prague, shopping for gifts and seeing the sights. And a wonderful city it is, one of my favourite cities in Europe. All that history, all that architecture, the bridges… and small, narrow streets that are never straight. They are practically organic (and probably feed on the gift shops, since those are everywhere), and it’s very difficult to find your way. It’s a labyrinth we are talking about.

Yes, I lost my way. The third time I came back to that innocent-looking Kodak shop (and there are a lot of shops with Kodak signs in central Prague, I might add), I knew I was in trouble. I was walking in circles, my feet aching while a particularly wet mixture of snow and rain poured down, and had no idea where I was. And I kept thinking about my GPS, safely tucked away back in my hotel room, remembering that I actually considered bringing it along for the walk but then shrugging, thinking “how hard can it be?”

I found shelter in a mall I hadn’t seen before (well, I think I hadn’t seen it before) and considered my next move while high-heeled ladies tried lipsticks and wondered what the out-of-place stranger was doing in the cosmetics department. I could ask someone, I suppose, some friendly local…

Then I remembered: I have a GPS in my mobile. It took a few minutes for it to find the satellites it required but after that, I only had to walk for a few more minutes to find a familiar landmark. In a counter-intuitive direction, I might add.

The wisdom in this story? Thank goodness for GPS devices. Oh, and XML Prague starts tomorrow morning.

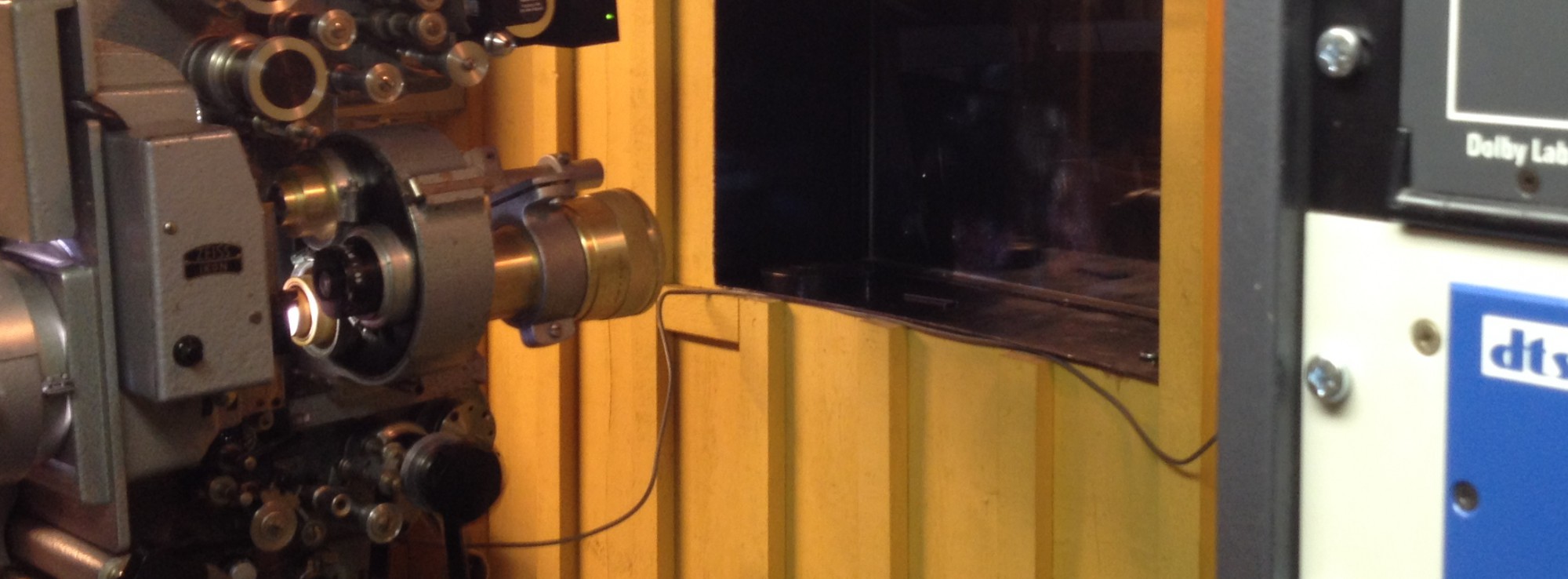

Automating Cinemas at XML Prague

I’ve been busy writing my presentation on Automating Cinemas Using XML, along with some example XML documents, for XML Prague in about a week and a half. I’m slightly biased, I know, but I think the presentation actually does make a good case for XML-based automation of cinemas. I know how primitive today’s automation is, in spite of the many technological advances, and I know where to improve it. The question I’m pondering right now is how to explain the key points to a bunch of XML people who’ve probably never seen a projection booth, and do it in twenty minutes.

The opposite holds true, of course, if I ever want to sell my ideas to theatre owners. They know enough about the technology (I hope) but how on earth will I be able to explain what XML is?

There’s still stuff to do (for one, it would be nice to finish the XSLT conversions required and be able to demonstrate those, live, at the conference) but the presentation itself is practically finished and the DTD and example documents are coming along nicely. I suppose I need to update the whitepaper accordingly and publish it here when I’m done.

See you at XML Prague!

XML Prague 2010

I’m proud to inform you that my little something on Film Markup Language has been accepted at XML Prague. The conference will take place on March 13-14.